When I joined the Joint Special Operations Command (JSOC), Osama bin Laden had been killed in Abbottabad only months earlier.

However, operations to root out terror were still in full swing, and in a dusty plywood building on Bagram Airfield, I witnessed the orchestrated madness that had, by then, evolved into an operating model far more refined than anything from the early days of the War on Terror.

Inside our tactical operations center, a bank of screens bathed the room in an electric glow, pumping in live drone footage of raids and targets moments from contact. In one corner, a small interagency team reviewed human-intelligence reports and tasked clandestine agents through elaborate communication systems. Across the room, geospatial analysts managed customized computers loaded with real-time satellite imagery and telemetry data.

What once felt like chaos had become a synchronized yet flexible ecosystem: fusion in motion, all day, every day, across dozens of small, hidden outposts worldwide. Next to me, SEALs, Rangers, intelligence analysts, Air Force combat controllers, and representatives of every three-letter agency in the Beltway clustered around high-tops, distilling the complex world of counterterrorism into one coherent picture.

On any given night, the Task Force would strike a dozen targets in less time than it once took to plan one—because operators on the ground had the trust and authority to turn instant information into insight and insight into action. The technology was dazzling but primitive by today’s standards. The decisive factor was something older: a tightly knit web of small, empowered teams who shared context and moved as one.

Fifteen years later, I found myself in a glass-and-chrome boardroom in Seattle, listening to a senior leader from a Fortune 5 company explain why his year-old generative AI program had stalled. The models produced elegant prose, identified opportunities, and generated eerily accurate product forecasts—yet nothing meaningful reached customers.

“We thought AI would accelerate these types of sales cycles,” he admitted. “But our growth is no better than it was five years ago. Someone still has to decide, commit, and execute.”

The parallels were unmistakable.

Whether in wartime Afghanistan or corporate America, technology accelerates the flow of information; people still turn that information into outcomes.

That is the essence of the Team of Teams philosophy—and it is why, in an era awash with artificial intelligence, human ingenuity matters more than ever.

This is not a playbook of bullet points but an observation: across boardrooms, factory floors, and Zoom screens, the critical unlock for every organization remains the enduring power of small, connected teams—augmented by AI, but never replaced by it.

Roughly 70% of digital-transformation programs fail to deliver on their promises—not because of poor algorithms, but because organizations underestimate the human system required to activate them. McChrystal Group research indicates that employees with cross-functional networks and psychological safety are significantly more likely to successfully adopt AI than those operating in silos.

Technology accelerates information; human connection still determines outcomes.

The Seduction of the Algorithm

My first brush with AI euphoria occurred in 2020, while leading a team of intelligence analysts responsible for synthesizing thousands of reports from every embassy and outpost worldwide into a comprehensive picture of the threats facing the U.S. homeland. It was tedious work. Analysts spent more time locating and organizing reports than reading or understanding them.

Our team set out to use Optical Character Recognition (OCR) and Natural Language Processing (NLP) technologies to ease that burden—freeing analysts to apply their experience and judgment to generate real insight. Our data scientists developed an inference pipeline and a user interface that enabled analysts to direct models toward the appropriate databases and organize their interests by theme and data originator.

The office served as a testbed for the broader analytic community: a pilot through which others would later leverage AI-generated insights once the system proved ready to scale. A year later, the product was live and producing thousands of report summaries. Yet frustration mounted. Demand for current analysis was high and had long outstripped what limited headcount and narrow specialization could supply. Even so, the office was not releasing the reports that the application was designed to produce.

“The model’s fine,” the lead technical developer said, “but our analysts are reluctant to finalize any of the reports; they don’t trust that the models are generating the same analysis they would.”

After significant negotiation with legal offices and the authority responsible for analytic tradecraft, there was still no resolution. The project had hit the institutional equivalent of a dead end: murky governance, siloed communication, and a culture that viewed AI as a magic wand rather than an amplifier of human judgment. That was my first real encounter with what I came to call the Interpretation Gap.

Identify the Interpretation Gap

The space between AI sensemaking and human judgment.

The Interpretation Gap describes the distance between what artificial intelligence can detect and what humans can decide. It’s the space where meaning is made or lost. Leaders fall into this gap when they assume that algorithmic outputs speak for themselves, forgetting that models can generate probabilities but not priorities. Insight still requires interpretation, context, and the courage to act.

AI doesn’t remove human judgment—it magnifies its importance. The models are getting faster, but the real bottleneck remains our ability to understand and apply what they reveal. Bridging that gap isn’t about more computing power; it’s about cultivating the trust, context, and clarity that turn information into action.

Signs of the Interpretation Gap

- Tool-first mindset: Teams fixate on the algorithm instead of the question it’s meant to answer.

- Low adoption: Employees disengage because they don’t see how outputs connect to real decisions.

- Cultural resistance: Teams trust their own intuition over the model and aren’t taught how to reconcile the two.

- Governance paralysis: No one knows who owns interpretation, so insights linger without ownership or execution.

Bridging the Gap

Closing the Interpretation Gap starts with clarity: Who decides what? What context matters most? What risks are acceptable? In one global deployment study, only half of employees felt equipped to use new AI tools effectively, and just one in three were comfortable crafting prompts before training. The problem wasn’t capability; it was confidence.

When leaders create space for dialogue between data and decision, they transform AI from a black box into a common language. The organizations that move fastest are those where small, trusted teams translate machine insight into human understanding, and then act on it together.

The same pattern repeats across industries. In the deployment study cited above, led by McChrystal Group, the result was predictable: adoption stalled, output plateaued, and teams reverted to old workflows. The code wasn’t broken; the culture was.

One respondent put it bluntly: “It’s meant to save time, but I haven’t yet found the space to learn it.”

Another added, “I could do so much more with it, but I need coaching to show me how.”

AI doesn’t fail technically; it fails behaviorally—when leaders treat it as a shortcut rather than a capability to be cultivated.

Tools improve with every commit; human systems ossify unless leaders tend them like gardens. The analysis office’s codebase was flawless, yet the surrounding ecosystem lacked the connective tissue needed to harness its potential: shared consciousness, psychological safety, and explicit decision-making rights.

Rediscover the Power of the Small Team

The antidote revealed itself in an unlikely place: Boston City Hall during the COVID-19 pandemic. With supply chains in disarray, the mayor created a “fusion cell” that drew together more than 70 agencies and departments. Its task was to pivot the city from in-person operations to a nimble, whole-of-government team determined to mitigate COVID-19’s impact and return the city to normal as quickly as possible.

They met twice daily on Zoom and ran a lightweight Kanban board stitched together with Slack, off-the-shelf collaboration tools, and a touch of AI that drafted summaries of emerging issues overnight for the next morning’s stand-up sync. Because every function sat in the same digital foxhole, bottlenecks surfaced in real time.

Similar network effects are also observed in corporate AI transformations. In one multinational case, McChrystal Group found that 10% of employees accounted for 77% of all AI activity. These “super-users” modeled new behaviors, trained peers, and drove cultural adoption. They became the connective tissue between departments, proving how empowered micro-teams can outpace bureaucracy and scale innovation faster than any central rollout plan.

The lesson was identical to Boston’s fusion cell: tight teams, loose coupling, radical transparency.

When trust and context flow freely, experimentation spreads naturally.

Watching Boston’s fusion cell at work, I felt the same pulse I’d felt in JSOC: tight teams, loose coupling, and autonomy at the edge, paired with radical transparency. AI accelerated background tasks, but the breakthrough came from human debate, not algorithmic suggestion: switching to functionally organized response teams aligned under a single umbrella of city service.

Create Space for Ingenuity

I often remind skeptical CFOs that Toyota’s vaunted kaizen culture did not blossom because robots welded faster. It flourished because leaders carved out time for kata—small, ritualized experiments where frontline operators proposed micro-improvements, tested them during the same shift, and shared lessons at the end of the day. AI promises similar productivity dividends, but leaders face a fork in the road: fill the reclaimed hours with more tasks, or convert them into creative slack where the next idea can germinate. One consumer-electronics client chose the latter.

After a chatbot began handling 40% of tier-one support tickets, the VP of Customer Care resisted the pressure to downsize the team. Instead, she formed “innovation squads” from redeployed agents to mine chat transcripts for unmet customer needs. Within a quarter, those squads surfaced three firmware tweaks that slashed returns by double digits. Automation begat capacity; capacity, properly stewarded, begat innovation.

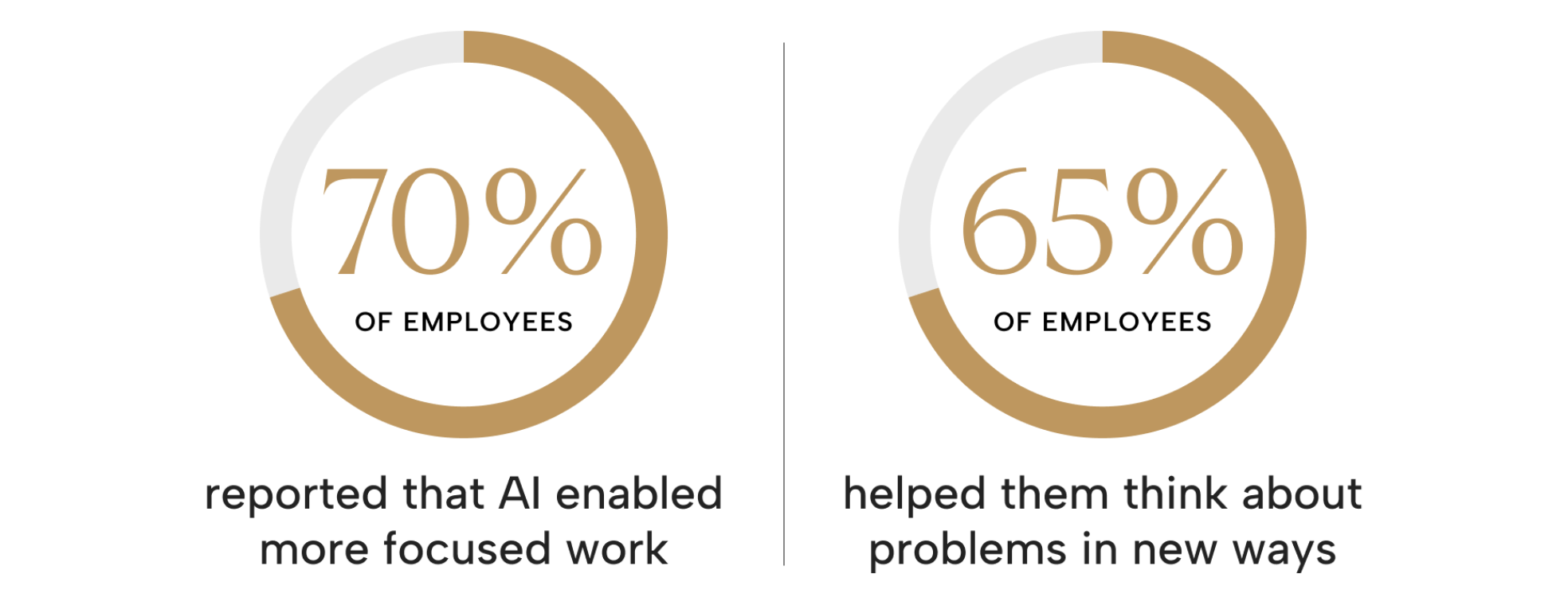

That kind of ingenuity shows up wherever AI is implemented thoughtfully. In research conducted by McChrystal Group, 70% of employees reported that AI enabled more focused work, and 65% said that it helped them think about problems in new ways.

However, the same studies warned that unless leaders deliberately protect that reclaimed time for creativity, it quickly fills up with busywork. The most successful organizations treat time saved as strategic oxygen—fuel for learning, experimentation, and problem-solving, rather than just another performance metric.

What Executives Must Do Differently

Across dozens of enterprise studies, one pattern stands out: insight alone doesn’t create value; execution does. In organizations that tied AI usage to measurable business outcomes and continuous feedback, teams gained up to 90 minutes of additional focus time each week and cut their time in meetings by nearly an hour. Where governance, learning loops, and cross-functional communication were weak, those gains vanished.

The differentiator isn’t access to models; it’s the operating rhythm around them.

Patterns accumulate. Across industries and geographies, four leadership behaviors separate AI tinkerers from AI multipliers.

Name the Problem Before Naming the Model.

AI adoption begins where strategy meets specificity. A defined problem is half solved.

The most successful leaders don’t start with algorithms; they start with intent. They can articulate in a single sentence what they’re solving for: “Give frontline merchandisers a same-day view of sell-through risk,” or “Free analysts to spend 70% of their time on insight, not input.”

Across every major enterprise we’ve studied, AI adoption stalls when teams chase tools instead of outcomes.

In one global readiness assessment, only half of employees felt equipped to use AI tools effectively, and just one in three were confident writing meaningful prompts before training. The missing link wasn’t access to technology—it was clarity of purpose.

When leaders define the “why” with precision, it cascades through every layer of the organization. It provides data scientists with focus, sets boundaries for governance teams, and gives operators the context for making informed judgment calls. Clarity doesn’t slow innovation—it accelerates it by channeling experimentation toward shared objectives.

Build Micro-Teams Around Value Streams.

The future belongs to organizations that think in squads, not silos.

AI success doesn’t come from more committees; it comes from smaller, cross-functional teams that move faster than bureaucracy. We’ve seen this pattern repeat: roughly 10% of users account for nearly 80% of total AI activity. Those “super-users” aren’t coders or executives; they’re bridge-builders who connect marketing, finance, and operations into outcome-focused squads.

These micro-teams become the organization’s neural network, linking functions, sharing prompts, and redistributing what works. It’s the corporate equivalent of the “fusion cells” that transformed counterterrorism operations in Afghanistan and city government responses during the pandemic: tight teams, loose coupling, and radical transparency.

The structure matters less than the behaviors inside it: daily syncs, open dashboards, visible metrics, and distributed decision rights. When AI becomes a shared toolset rather than a departmental trophy, the organization learns and scales as one.

Patent the Learning Loop.

The organizations that learn fastest will outpace those that code best.

Every AI initiative should operate like a perpetual after-action review: What did we try? What happened? What did we learn, and how will we apply this knowledge by Friday?

In practice, the most effective teams establish feedback loops between users and leaders.

Dashboards that track usage behavior, sentiment, and business outcomes create real-time visibility, allowing organizations to pivot quickly. In one enterprise, cross-app users—those who used three or more AI applications—reported saving four hours or more per week, a tangible return resulting from consistent iteration and measurement.

The lesson: progress compounds when curiosity becomes a habit. Feedback is no longer a quarterly meeting—it’s a rhythm. Organizations that “patent” the loop don’t just capture lessons; they codify adaptability.

Guard the Human Edge.

The advantage isn’t artificial. It’s human.

Technology can illuminate patterns, but it can’t provide meaning. The best leaders treat AI as radar on the bridge, not the captain at the helm—an extension of human senses, not a replacement for judgment, courage, or empathy.

Across large-scale deployments, the real constraint isn’t computing power—it’s confidence.

Organizations that built trust through hands-on learning and transparent feedback saw adoption accelerate—not because the model improved, but because the people did. Guarding the human edge means investing in understanding before automation and ensuring people trust their tools as much as they trust their teammates.

Protecting that edge also means building systems of trust—psychological safety, transparency, and empowerment—that let teams challenge, test, and refine the machine’s output without fear of reprisal.

The Journey Ahead

On my office bookshelf sits a weathered map of Afghanistan, its districts marked with colored lines tracing JSOC raids through the winter of 2011. I keep it there to remember a simple truth: human intuition and experience still fight wars. The drones that drove our targeting then played the role that large language models play today: guides and accelerants to decision-making, not replacements.

AI is becoming the new electricity: quietly powering everything, differentiating nothing. Your competitive moat will be the speed at which your teams sense, decide, and act together. My colleagues and I call it a Team of Teams: a mesh of trust, clarity, and disciplined autonomy that allows insight to flow to the edge and action to flow back to the center.

Our data from multiple large organizations reinforces this model. Adoption success is directly correlated with network strength and the diversity of collaboration. Employees who spanned functions and geographies were far more likely to sustain multi-app AI use and drive measurable business results.

In short, shared consciousness and empowered execution aren’t abstract ideals; they’re quantifiable performance levers. In the age of AI, the most advanced technology still relies on the oldest competitive advantage: human trust.

It is the modern incarnation of Team of Teams—and the leadership mandate of our age. So the next time a glossy slide deck promises a hundredfold ROI from an algorithm, pause and look around the room.